Sept 9, 2012

Ada Poon, assistant professor of electrical engineering, led the research. (Photo: Linda A. Cicero / Stanford News Service)

Stanford electrical engineers overturn existing models to demonstrate the feasibility of a millimeter-sized, wirelessly powered cardiac device. The findings, say the researchers, could dramatically alter the scale of medical devices implanted in the human body.

A team of engineers at Stanford has demonstrated the feasibility of a super-small, implantable cardiac device that gets its power not from batteries but from radio waves transmitted from a small power device on the surface of the body.

The implanted device is contained in a cube just 0.8 millimeter on a side. It could fit on the head of a pin.

The findings were published in the journal Applied Physics Letters. In their paper, the researchers demonstrated wireless power transfer to a millimeter-sized device implanted 5 centimeters inside the chest on the surface of the heart – a depth once thought out of reach for wireless power transmission.

The engineers say the research is a major step toward a day when all implants are driven wirelessly. Beyond the heart, they believe such devices might include swallowable endoscopes (so-called "pillcams" that travel the digestive tract), permanent pacemakers and precision brain stimulators: virtually any medical application where device size and power matter.

A revolution in the body

Implantable medical devices in the human body have revolutionized medicine. Hundreds of thousands if not millions of pacemakers, cochlear implants and drug pumps are today helping patients live relatively normal lives, but these devices are not without engineering challenges.

First, they require power, which means batteries, and batteries are bulky. In a device like a pacemaker, the battery alone accounts for as much as half the volume of the device. Second, batteries have finite lives. New surgery is needed when they wane.

"Wireless power solves both challenges," said Ada Poon, assistant professor of electrical engineering, who headed up the research. She was assisted by Sanghoek Kim and John Ho, both doctoral candidates in her lab.

Last year, Poon made headlines when she demonstrated a wirelessly powered, self-propelled device capable of swimming through the bloodstream. To get there she needed to overturn some long-held assumptions about delivery of wireless power through the human body.

Her latest device works by a combination of inductive and radiative transmission of power. Both are types of electromagnetic transfer in which a transmitter sends radio waves to a coil of wire inside the body. The radio waves produce an electrical current in the coil sufficient to operate a small device.

There is an inverse relationship between the frequency of the transmitted radio waves and the size of the receiving antenna. That is, to deliver a desired level of power, lower-frequency waves require bigger coils, while higher-frequency waves can work with smaller coils.

"For implantable medical devices, therefore, the goal is a high-frequency transmitter and a small receiver, but there is one big hurdle," Kim said.

Ignoring consensus

Existing mathematical models have held that high-frequency radio waves do not penetrate far enough into human tissue, necessitating the use of low-frequency transmitters and large antennas – too large to be practical for implantable devices.

Ignoring the consensus, Poon proved the models wrong. Human tissues dissipate electric fields quickly, it is true, but radio waves can travel in a different way – as alternating waves of electric and magnetic fields. With the correct equations in hand, she discovered that high-frequency signals travel much deeper than anyone suspected.

"In fact, to achieve greater power efficiency, it is actually advantageous that human tissue is a very poor electrical conductor," said Kim. "If it were a good conductor, it would absorb energy, create heating and prevent sufficient power from reaching the implant."

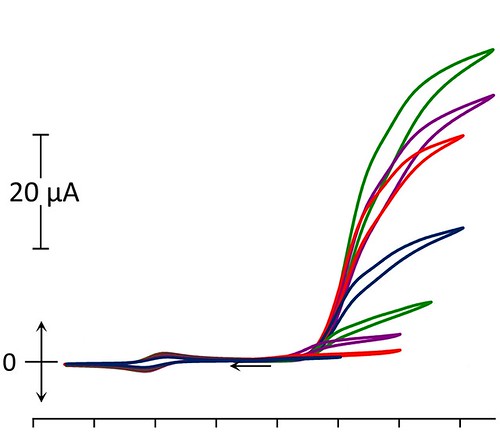

Using their revised models, the researchers found that maximum power transfer through human tissue occurs at about 1.7 billion cycles per second (1.7 gigahertz), much higher than previously thought.

"In this high-frequency range, we can increase power transfer by about 10 times over earlier devices," said Ho, who honed the mathematical models.

The discovery meant that the team could shrink the receiving antenna by a factor of 10 as well, to a scale that makes wireless implantable devices feasible. At the optimal frequency, a millimeter-radius coil is capable of harvesting more than 50 microwatts of power, well in excess of the needs of a recently demonstrated 8-microwatt pacemaker.
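To put those figures in perspective, the arithmetic can be sketched in a few lines of Python. This is only an illustration using the numbers quoted in this article (1.7 gigahertz, 50 microwatts harvested, an 8-microwatt pacemaker). The function and variable names are hypothetical, and the wavelength computed is the free-space value, not the shorter wavelength the waves would have inside tissue, which depends on tissue properties not given here.

```python
# Illustrative arithmetic only; names are hypothetical, figures come from the article.
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact, by SI definition


def free_space_wavelength_m(frequency_hz: float) -> float:
    """Wavelength in free space (not in tissue) for a given frequency."""
    return SPEED_OF_LIGHT_M_PER_S / frequency_hz


def power_headroom(harvested_uw: float, required_uw: float) -> float:
    """Ratio of harvested power to the power a device needs."""
    return harvested_uw / required_uw


optimal_frequency_hz = 1.7e9  # about 1.7 billion cycles per second
harvested_uw = 50.0           # "more than 50 microwatts", so treat as a lower bound
pacemaker_uw = 8.0            # the recently demonstrated 8-microwatt pacemaker

wavelength_cm = free_space_wavelength_m(optimal_frequency_hz) * 100
headroom = power_headroom(harvested_uw, pacemaker_uw)

print(f"Free-space wavelength at 1.7 GHz: {wavelength_cm:.1f} cm")
print(f"Harvested power is at least {headroom:.2f}x the pacemaker's needs")
```

Because the article says the coil harvests "more than" 50 microwatts, the ratio printed here should be read as a minimum margin rather than an exact one.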

Engineering challenges

With the dimensional challenges solved, the team found itself bound by other engineering constraints. First, electronic medical devices must meet stringent health standards established by IEEE (Institute of Electrical and Electronics Engineers), particularly with regard to tissue heating. Second, the team found that the receiving and transmitting antennas had to be optimally oriented to achieve maximum efficiency. Differences in alignment of just a few degrees could produce troubling drops in power.

"This can't happen with medical devices," said Poon. "As the human heart and body are in constant motion, solving this issue was critical to the success of our research." The team responded by designing an innovative slotted transmitting antenna structure. It delivers consistent power efficiency regardless of the orientation of the two antennas.

The new design serves additionally to focus the radio waves precisely at the point inside the body where the implanted device rests on the surface of the heart – increasing the electric field where it is needed most, but canceling it elsewhere. This helps reduce overall tissue heating to levels well within the IEEE standards. Poon has applied for a patent on the antenna structure.

This research was made possible by funding from the C2S2 Focus Center, one of six research centers funded under the Focus Center Research Program, a Semiconductor Research Corporation entity. Lisa Chen also contributed to this study.

Source: Stanford University